According to Google, its indexing crawler (Googlebot) respects the contents of robots.txt.

If we specify something in robots.txt, such as

Disallow: /restrict_folder/

then its crawler will respect this directive and will not crawl anything inside /restrict_folder/.
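To see how such a rule is evaluated, here is a minimal sketch using Python's standard `urllib.robotparser`. It assumes the Disallow line sits under a `User-agent: *` group (the post shows only the Disallow line), and `www.example.com` is just a placeholder host. It models Python's parser, not Googlebot's exact matching logic.

```python
from urllib.robotparser import RobotFileParser

# The rule from the text, wrapped in a "User-agent: *" group.
# The group line is an assumption; the post only shows the Disallow line.
ROBOTS_TXT = """\
User-agent: *
Disallow: /restrict_folder/
"""


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the given robots.txt text lets user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Placeholder URLs: one inside the restricted folder, one outside it.
blocked = is_allowed(
    ROBOTS_TXT, "Googlebot", "https://www.example.com/restrict_folder/page.html"
)
allowed = is_allowed(
    ROBOTS_TXT, "Googlebot", "https://www.example.com/public/page.html"
)
print("restricted page allowed?", blocked)
print("public page allowed?", allowed)
```

A well-behaved crawler would skip the first URL and fetch the second.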

Some other crawlers might not respect this directive, though.

So Google recommends that we protect our not-so-public pages with a password or some other form of authentication.

OK, but what if you don’t have a robots.txt defined, or the robots.txt allows everything and does not restrict any folder?

Only then will Googlebot crawl the page and read its META directives.

Depending on the instructions in the robots META tag, it may or may not index and archive the page.

If nothing restricts it, it will index and archive the page, and do everything else it needs for search purposes.
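As a rough illustration of what reading the robots META tag involves, the sketch below pulls the directives out of a page with Python's standard `html.parser`. The sample page and the names `RobotsMetaParser` and `robots_directives` are made up for this example; it is not how Googlebot is actually implemented.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects the directives found in <meta name="robots" content="...">."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr_map = dict(attrs)
        if (attr_map.get("name") or "").lower() == "robots":
            content = attr_map.get("content") or ""
            # Directives are comma separated, e.g. "noindex, noarchive".
            self.directives.update(
                part.strip().lower() for part in content.split(",") if part.strip()
            )


def robots_directives(html: str) -> set:
    """Return the set of robots META directives present in the HTML text."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return parser.directives


# Hypothetical page that asks crawlers not to index or archive it.
SAMPLE_PAGE = (
    '<html><head><meta name="robots" content="noindex, noarchive"></head>'
    "<body>hello</body></html>"
)

directives = robots_directives(SAMPLE_PAGE)
may_index = "noindex" not in directives
may_archive = "noarchive" not in directives
print(sorted(directives), may_index, may_archive)
```

A page with no robots META tag gives an empty set, which matches the default case in the text: nothing restricts indexing or archiving.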

Then comes the canonical directive. Note that it is a <link> element in the page's head, not a robots META tag.

What it defines is this:

if a page happens to have two different links pointing to it that display the same content,

we can use this directive to define which one should be indexed.

Example:

1. https://www.namran.com/2009/05/19/instructing-googlebot-using-robotstxt

2. https://www.namran.com/2009/05/19/instructing-googlebot-using-robotstxt#comment

Both links point to the same page, and we would prefer that only the first one be indexed,

instead of both.

We can then write the canonical as

<link rel='canonical' href='https://www.namran.com/2009/05/19/instructing-googlebot-using-robotstxt'>

More details can be found here.
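For the two example URLs above, the only difference is the `#comment` fragment, so the preferred URL can be derived by dropping it. The sketch below does that with the standard `urllib.parse.urldefrag`, assuming the first URL is the one we want indexed. The helper name `canonical_link_tag` is made up for this example, and fragments are only one of several ways duplicate URLs can arise.

```python
from urllib.parse import urldefrag

# The two example URLs from the text.
URL_1 = "https://www.namran.com/2009/05/19/instructing-googlebot-using-robotstxt"
URL_2 = "https://www.namran.com/2009/05/19/instructing-googlebot-using-robotstxt#comment"


def canonical_link_tag(url: str) -> str:
    """Build a canonical <link> tag for url with any #fragment removed."""
    page_url, _fragment = urldefrag(url)
    return f"<link rel='canonical' href='{page_url}'>"


# Both variants produce the same tag, pointing at the first URL.
print(canonical_link_tag(URL_1))
print(canonical_link_tag(URL_2))
```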

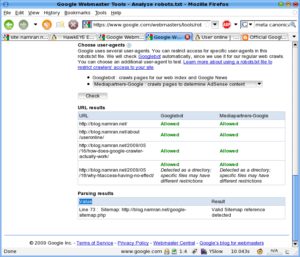

And to examine your robots settings:

1. Log in to Google Webmaster Tools.

2. Click Tools in the left menu.

3. Then you can see “Analyze robots.txt”.

My link would be something like https://www.google.com/webmasters/tools/robots?siteUrl=https%3A%2F%2Fwww.namran.com%2F&hl=en

This tool can test whether the robots.txt is properly written, and whether or not it blocks crawlers from accessing a certain page.

Just fill in the desired URL in the box provided and you will be able to see the analysis.

something like this..

P.S. I still can’t understand why my recent posts haven’t been archived/indexed since 10th May 2009, though. Can’t recall why. *sigh*